Cutting incident response time by 68% with an AI-powered observability dashboard

NeuralOps had a powerful AI engine that could detect infrastructure anomalies before they became incidents. What they lacked was an interface that made that intelligence usable. We ran a Discovery Sprint, designed the product from the ground up, and shipped the v1 dashboard in 11 weeks.

68% reduction in mean time to resolution

trial-to-paid conversion (up from 9%)

daily Slack alerts per engineer

SRE satisfaction score at 90 days

11 weeks from Discovery Sprint to production

01 / The Problem

NeuralOps' ML pipeline was generating accurate, early-warning signals — but those signals were surfaced through a CLI and a tangle of Slack alerts. Engineering teams were experiencing alert fatigue: an average of 340 Slack notifications per engineer per day, with no way to understand severity, cluster related events, or track resolution progress. Prospects loved the demo, but conversion from trial to paid was stuck at 9% because the product required too much setup expertise to feel immediately valuable. The core challenge was clear: the intelligence existed; the interface to make it legible did not.

02 / Our Approach

We started with a five-day Discovery Sprint embedded with NeuralOps' engineering and customer success teams. We interviewed six engineering managers and four site reliability engineers across trial accounts, and shadowed two on-call rotations to understand how incident response worked in practice rather than how it was documented. Three findings shaped everything. First, on-call engineers made triage decisions in under 90 seconds, so the dashboard had to communicate severity and blast radius at a glance. Second, context collapse was the primary source of cognitive load: the same underlying issue could generate 40+ discrete alerts with no visible relationship between them. Third, teams wanted to involve their wider engineering org in post-mortems but had no shareable artefact to anchor the conversation. We also ran a competitive audit of Datadog, Grafana, and PagerDuty to identify where information density became an obstacle rather than an asset.

03 / The Solution

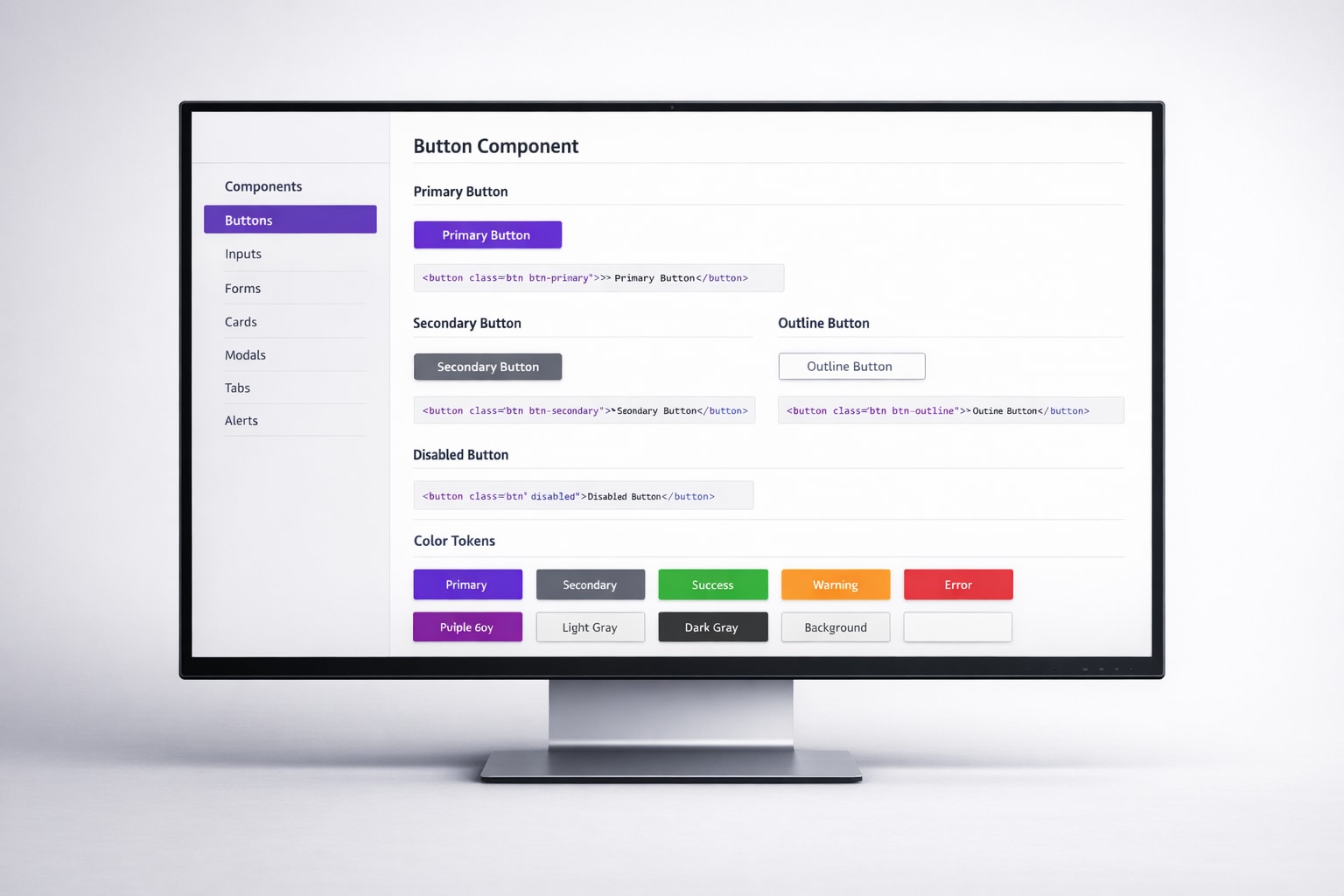

We designed and built a React dashboard that organised monitoring intelligence into three layers: a real-time Incident Command surface that clustered correlated alerts into single incidents with an AI-generated blast radius estimate; a Service Health timeline that gave teams a 72-hour rolling view of every service's stability, with drill-down to the raw signal; and a Post-Mortem builder that auto-populated from incident data and allowed collaborative annotation before export. The visual language was deliberately restrained — a dark-mode interface with a strict four-colour severity system and D3.js-powered flame graphs that communicated impact density without overwhelming on-call engineers at 2am. We instrumented the dashboard with product analytics from day one, running fortnightly review sessions with the NeuralOps team to iterate on interaction patterns against real usage data across a six-week release cadence.
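To make the clustering idea concrete, here is a minimal TypeScript sketch of how a burst of discrete alerts might be folded into single incidents. It is an illustration only: the production dashboard relied on NeuralOps' ML correlation, whereas this sketch swaps in a simple heuristic (same service, arriving within a time window). The `Alert` and `Incident` shapes, the four severity names, the `clusterAlerts` function, and the five-minute default window are all assumptions made for the example, not the shipped schema.

```typescript
// Hypothetical severity levels standing in for the four-colour severity system.
type Severity = "info" | "warning" | "major" | "critical";

// Hypothetical alert shape; field names are assumptions for illustration.
interface Alert {
  id: string;
  service: string;
  severity: Severity;
  timestamp: number; // epoch milliseconds
}

// A cluster of related alerts presented to the on-call engineer as one item.
interface Incident {
  service: string;
  severity: Severity; // highest severity seen among member alerts
  startedAt: number;
  lastSeenAt: number;
  alerts: Alert[];
}

const SEVERITY_RANK: Record<Severity, number> = {
  info: 0,
  warning: 1,
  major: 2,
  critical: 3,
};

// Groups alerts for the same service into one incident as long as each new
// alert arrives within `windowMs` of the previous alert in that incident.
function clusterAlerts(alerts: Alert[], windowMs: number = 5 * 60000): Incident[] {
  const sorted = [...alerts].sort((a, b) => a.timestamp - b.timestamp);
  const latestByService = new Map<string, Incident>();
  const incidents: Incident[] = [];

  for (const alert of sorted) {
    const current = latestByService.get(alert.service);
    if (current && alert.timestamp - current.lastSeenAt <= windowMs) {
      // Same service, still inside the window: extend the existing incident.
      current.alerts.push(alert);
      current.lastSeenAt = alert.timestamp;
      if (SEVERITY_RANK[alert.severity] > SEVERITY_RANK[current.severity]) {
        current.severity = alert.severity;
      }
    } else {
      // First alert for this service, or the window has lapsed: open a new incident.
      const incident: Incident = {
        service: alert.service,
        severity: alert.severity,
        startedAt: alert.timestamp,
        lastSeenAt: alert.timestamp,
        alerts: [alert],
      };
      latestByService.set(alert.service, incident);
      incidents.push(incident);
    }
  }
  return incidents;
}
```

Calling `clusterAlerts(alerts)` on a raw alert stream returns one `Incident` per burst, each carrying the highest severity among its members, which is the kind of single row an on-call engineer could triage at a glance. A real correlation engine would also need to link alerts across services, which this heuristic deliberately leaves out.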

Tech Stack

“We had the model. Leangency gave us the product. Before the dashboard, our best customers were the ones willing to fight through the CLI — now we onboard enterprise teams in an afternoon. The incident clustering alone has changed how our on-call rotations feel.”
